...The dismo package is awesome: with a few short lines of code you can easily read and map species distribution data from GBIF (the Global Biodiversity Information Facility):
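A minimal sketch of the mapping step. It assumes the dismo package is installed, GBIF is reachable, and, as in dismo's own examples, the records come back as a data frame with `lon` and `lat` columns. The helper names `occurrences_with_coords()` and `map_occurrences()` are hypothetical, and the species in the usage comment is only an example.

```r
# Hypothetical helpers for occurrence records as returned by dismo::gbif()
# (a data frame with, among others, numeric "lon" and "lat" columns).

# Keep only records that actually carry coordinates.
occurrences_with_coords <- function(occ) {
  occ[!is.na(occ$lon) & !is.na(occ$lat), , drop = FALSE]
}

# Plot the georeferenced records; add country outlines if the
# maps package happens to be installed.
map_occurrences <- function(occ, ...) {
  occ <- occurrences_with_coords(occ)
  plot(occ$lon, occ$lat, xlab = "Longitude", ylab = "Latitude", ...)
  if (requireNamespace("maps", quietly = TRUE)) {
    maps::map("world", add = TRUE)
  }
  invisible(occ)
}

# Usage (needs internet access and the dismo package):
# occ <- dismo::gbif("solanum", "acaule*", geo = FALSE)
# map_occurrences(occ, pch = 20, col = "red")
```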

28 Nov 2011

27 Nov 2011

A Quick Geo-Trick for Google Maps in R (Using dismo)

...I thought this geocoding bit might be worth sharing (I found it HERE when searching the web for dismo documentation).

25 Nov 2011

24 Nov 2011

A Function for Adding up Matrices with Different Dimensions

I couldn't find a function that sums up matrices of different dimensions, so I coded one myself. It adds up matrices of equal as well as of different dimensions.
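One way to do this is sketched below as a hypothetical `add_matrices()`. It is not the original function. Cells are matched by row and column names, so every matrix needs dimnames, and a cell missing from one matrix simply counts as 0.

```r
# Sum any number of matrices with possibly different dimensions.
# Cells are matched by row and column names; a cell that is missing
# from one matrix contributes 0 to the sum.
add_matrices <- function(...) {
  mats <- list(...)
  has_names <- vapply(mats, function(m) !is.null(rownames(m)) && !is.null(colnames(m)),
                      logical(1))
  if (!all(has_names)) stop("all matrices need row and column names")

  # The result spans the union of all row and column names.
  rows <- unique(unlist(lapply(mats, rownames)))
  cols <- unique(unlist(lapply(mats, colnames)))
  out <- matrix(0, nrow = length(rows), ncol = length(cols),
                dimnames = list(rows, cols))

  # Add each matrix into its matching block of the result.
  for (m in mats) {
    r <- rownames(m)
    cl <- colnames(m)
    out[r, cl] <- out[r, cl, drop = FALSE] + m
  }
  out
}

# Usage: a 2 x 2 and a 1 x 1 matrix give a 2 x 3 result
# (rows r1, r2; columns c1, c2, c3), with zeros where no value exists.
a <- matrix(1:4, nrow = 2, dimnames = list(c("r1", "r2"), c("c1", "c2")))
b <- matrix(10, nrow = 1, ncol = 1, dimnames = list("r2", "c3"))
s <- add_matrices(a, b)
```

Matching by names rather than by position means that, for example, two species-by-site tables with partly different species lists are added up cell by cell for the species they share.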

16 Nov 2011

Using SyntaxHighlighter and R Brush in Blogger

If you think it is time to give the code examples in your blog a more readable look, you may follow this path and use SyntaxHighlighter.

First thing: check the SyntaxHighlighter Website for the basics.

14 Nov 2011

How to Download and Run Google Docs Script in the R Console

...There is not much to it:

Upload a .txt file containing your script, share it with anyone who has the link, then simply run something like the code below.
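A minimal sketch of the idea, assuming the shared file can be fetched through a direct download link. `run_remote_script()` is a hypothetical helper name, and the URL in the usage comment is a placeholder you must replace with your own document's link.

```r
# Download a plain-text R script from a URL into a temporary file
# and run it with source().
run_remote_script <- function(url, envir = globalenv()) {
  destfile <- tempfile(fileext = ".R")
  on.exit(unlink(destfile))          # remove the temporary copy afterwards
  download.file(url, destfile, quiet = TRUE)
  source(destfile, local = envir)    # evaluate the script in 'envir'
  invisible(NULL)
}

# Usage (placeholder URL: insert the download link of your own document):
# doc_url <- "https://docs.google.com/...your-document-id..."
# run_remote_script(doc_url)
```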

ps: When adapting the code for your own purposes, remember to change "https" to "http" and to insert your own document id.

pps: You can use download.file() in this way to download any file from Google Docs.

In Reply to Ben Bolker's Post "Google Scholar (still) sucks"

Replying to Ben Bolker's post Google Scholar (still) sucks:

Ben,

thanks for illustrating the issue in your post!

The main purpose of my function GScholarScraper is to retrieve titles, simply because this is the best we can get from Google Scholar. Abstracts are truncated and thus shouldn't be used for meta-analysis. Titles are truncated too, as you said, and there is no way around that. However, this happens less often and is less severe than with abstracts, i.e.

The CSV output is optional; the data frame with word frequencies and the word cloud are always returned. For any other output, one can easily add a few appropriate lines to the script.

My opinion:

My function is good for a quick summary and illustration of a query result.

Tony's function is evidently better if you want to pull all fields of a given query (authors, titles, abstracts, ...).

I wonder if people have come across rOpenSci? I guess it might be very interesting in this context!

Last remark: of course, a Google Scholar API would solve all our problems in this regard.

Best,

Kay

10 Nov 2011

An Image Crossfader Function

A spin-off from a project, the jpgfader function (you can see it in funny use HERE):
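The jpgfader code itself is not reproduced here. As a rough, hypothetical sketch of the underlying idea, a crossfade is a sequence of weighted averages of two equally sized images. The arrays are assumed to be height x width x channel with values in [0, 1], which is what `jpeg::readJPEG()` returns; `crossfade_frames()` is an illustrative name.

```r
# Linear crossfade between two images of identical size.
# Frame k is (1 - w_k) * img1 + w_k * img2, with w running from 0 to 1.
crossfade_frames <- function(img1, img2, n_frames = 10) {
  stopifnot(identical(dim(img1), dim(img2)), n_frames >= 2)
  weights <- seq(0, 1, length.out = n_frames)
  lapply(weights, function(w) (1 - w) * img1 + w * img2)
}

# Usage with two toy "images": all black fading to all white.
black <- array(0, dim = c(2, 2, 3))
white <- array(1, dim = c(2, 2, 3))
frames <- crossfade_frames(black, white, n_frames = 5)
# Each frame can be drawn with plot(as.raster(frames[[k]])).
```

With real photos you would read both files with `jpeg::readJPEG()` first; both must have the same pixel dimensions.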

Convert Date Field into Year in ArcGIS

Objective: a date field [date] in the format dd.mm.yyyy should be converted to the format yyyy.

Solution:

(1) Add an integer field [YEAR] to the attribute table.

(2) Field Calculator: YEAR = year([date])
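For comparison, the same conversion of dd.mm.yyyy strings in R. This is a minimal sketch with a hypothetical helper name, `date_to_year()`. Strings that don't match the format become NA.

```r
# Convert "dd.mm.yyyy" date strings to integer years.
date_to_year <- function(x) {
  d <- as.Date(x, format = "%d.%m.%Y")  # NA for strings that don't parse
  as.integer(format(d, "%Y"))
}

years <- date_to_year(c("24.11.2011", "01.01.1999"))
```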

9 Nov 2011

Add Transparency to JPEG - Yes, We Can!

...Just read in your JPEG, add an alpha channel manually, then assign values for transparency. Of course, for printing you need a graphics device that supports alpha (semi-transparency).

See how it's done HERE.
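A minimal sketch of this step. It assumes the image is already an RGB array (height x width x 3, values in [0, 1]), such as `jpeg::readJPEG()` returns. `add_alpha()` is a hypothetical helper, and the alpha plane can be either a single value or a per-pixel matrix.

```r
# Append an alpha (opacity) plane to an RGB image array.
# alpha: one value for the whole image, or a height x width matrix;
# 0 = fully transparent, 1 = fully opaque.
add_alpha <- function(img, alpha = 1) {
  stopifnot(length(dim(img)) == 3, dim(img)[3] == 3)
  hw <- dim(img)[1:2]
  a <- array(alpha, dim = hw)        # recycle a scalar to every pixel
  array(c(img, a), dim = c(hw, 4))   # R, G, B planes followed by alpha
}

# Usage: a toy 2 x 3 grey image with opacity 0.3.
img <- array(0.5, dim = c(2, 3, 3))
img_rgba <- add_alpha(img, alpha = 0.3)
# To draw it, use a device that supports semi-transparency, e.g.
# png("out.png", bg = "transparent"); plot(as.raster(img_rgba)); dev.off()
```

Because R arrays are stored column-major with the last dimension varying slowest, concatenating the RGB values and the alpha values and reshaping to four planes puts alpha exactly in the fourth plane.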

R-Function GScholarScraper to Webscrape Google Scholar Search Result

NOTE: You'll find the update HERE and HERE.

NOTE: The script is currently not working because the code of the Google Scholar site has changed...

I'll look into this as soon as I find some spare time!

NOTE: If you try to access Google Scholar programmatically, consider these words of caution:

http://stackoverflow.com/questions/7523961/google-scholar-with-matlab/7587994#7587994

...

Based on my previous post on web scraping, I coded the function "GScholarScraper" and uploaded it HERE for testing!

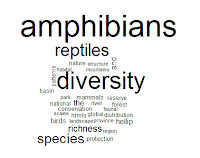

The function will pull all (!) results, processing pages in chunks of 100 results/titles, and return a file with all titles, links, etc. It will also produce a word cloud using the words in the publication titles.
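The word-frequency step behind such a word cloud can be sketched in base R as follows. This is not the GScholarScraper code: `word_freq()`, its small stop-word list and the example titles are made up for illustration, and splitting on `[^a-z]+` drops digits and accented characters.

```r
# Count how often each word occurs in a set of titles.
# Returns a data frame sorted by decreasing frequency.
word_freq <- function(titles,
                      stopwords = c("a", "an", "and", "the", "of", "in",
                                    "on", "for", "to", "with", "at")) {
  words <- unlist(strsplit(tolower(titles), "[^a-z]+"))
  words <- words[nchar(words) > 0 & !(words %in% stopwords)]
  counts <- sort(table(words), decreasing = TRUE)
  data.frame(word = names(counts), freq = as.integer(counts),
             stringsAsFactors = FALSE)
}

titles <- c("Amphibian richness and landscape structure",
            "Landscape effects on amphibian diversity",
            "Amphibian decline")
wf <- word_freq(titles)
# With the wordcloud package installed:
# wordcloud::wordcloud(wf$word, wf$freq, min.freq = 1)
```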

Please try your own search strings and report errors, etc.!

Built and runs properly under:

R version 2.13.0 (2011-04-13) and R version 2.13.2 (2011-09-30)

Platform: i386-pc-mingw32/i386 (32-bit)

locale:

[1] LC_COLLATE=English_United States.1252

[2] LC_CTYPE=English_United States.1252

[3] LC_MONETARY=English_United States.1252

[4] LC_NUMERIC=C

[5] LC_TIME=English_United States.1252

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] stringr_0.5 tm_0.5-6 wordcloud_1.2 Rcpp_0.9.7

loaded via a namespace (and not attached):

[1] plyr_1.5.1 slam_0.1-23

PS: The errors reported recently (see comments) have been resolved, and the source code has been updated.

Labels: Google Scholar, GScholarScraper(), R, R Graphs, readLines(), Web Scraping, Word-Cloud

5 Nov 2011

Next Level Web Scraping

The outcome presented above will not be very useful to most of you, but it is still a good example of what can be done with web scraping in R.

Background: TIRIS is the federal geo-statistical service of North Tyrol, Austria. Among the many great things it provides are historical and recent aerial photographs, which can be addressed via URLs. This is the basis of the script: the URLs are retrieved and some of their parameters are adjusted, the images are downloaded from the customized addresses, and finally they are animated with saveHTML() from the animation package. The resulting HTML animation lets you view and skip through aerial photographs of any location in North Tyrol from 1940 to 2010 and see how the landscape, buildings, etc. have changed...

View the script HERE.
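The URL-customization step might look roughly like this. The base URL and the parameter names `year`, `x` and `y` are hypothetical placeholders, since the real TIRIS request format is not given here. The point is simply to generate one address per year; those images can then be downloaded and handed to saveHTML().

```r
# Build one image URL per year by filling in query parameters.
# base_url and the parameter names are hypothetical placeholders.
build_image_urls <- function(base_url, years, x, y) {
  # Avoid scientific notation such as "8e+04" in the coordinates.
  fmt <- function(v) format(v, scientific = FALSE, trim = TRUE)
  sprintf("%s?year=%d&x=%s&y=%s", base_url, as.integer(years), fmt(x), fmt(y))
}

years <- c(1940, 1970, 2010)
urls <- build_image_urls("https://example.org/aerial", years,
                         x = 80000, y = 230000)

# Downloading (not run here), one file per year:
# for (i in seq_along(urls))
#   download.file(urls[i], sprintf("aerial_%d.jpg", years[i]), mode = "wb")
```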

3 Nov 2011

Some Simple but Probably Useful Regex Examples with the R Package stringr...

I found that examples of regex use in R are rather rare, so I will provide some examples from my own learning materials, mostly stolen from the help pages, with small but maybe illustrative adaptations.
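A few examples in the same spirit. They are written here with base R's regex functions (`grepl()`, `regmatches()`, `regexec()`, `gsub()`) so they run without extra packages. stringr's `str_detect()`, `str_extract()`, `str_extract_all()`, `str_match()` and `str_replace_all()` do the same jobs with a consistent argument order. The input strings are made up.

```r
x <- c("apple 12", "banana 7", "cherry")

# Which strings contain a digit? (cf. stringr::str_detect)
has_digit <- grepl("[0-9]", x)

# First number in each string, NA where there is none (cf. str_extract)
m <- regexpr("[0-9]+", x)
first_num <- rep(NA_character_, length(x))
first_num[m != -1] <- regmatches(x, m)

# All numbers in one string (cf. str_extract_all)
all_nums <- regmatches("a1b22c333", gregexpr("[0-9]+", "a1b22c333"))[[1]]

# Capture groups: whole match, word, number (cf. str_match)
groups <- regmatches(x, regexec("([a-z]+) ([0-9]+)", x))

# Remove all vowels (cf. str_replace_all)
no_vowels <- gsub("[aeiou]", "", x)
```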

ps: I will extend this list of examples HERE occasionally.

1 Nov 2011

Webscraping Google Scholar & Show Result as Word Cloud Using R

NOTE: Please see the update HERE and HERE!

...When reading Scott Chamberlain's latest post about web scraping, I felt it was time to pick up and complete an idea that I had been brooding over for some time:

When a scientist sets out on a new project, the first thing to do is to check whether other people have already answered the very questions he is about to work on. For instance, I was interested in whether any research had been done on amphibian diversity at regional/geographical scales correlated with environmental/landscape parameters. Usually I would go to Google Scholar, search for something like - intitle:amphibians AND intitle:richness OR intitle:diversity AND environment OR landscape - and then browse through the results. But this is often tedious, and a quick way to examine the results visually would be of great benefit.

Labels: Google Scholar, grep(), HTML, R, readLines(), str_match_all(), String-Manipulation, stringr, strsplit(), Word-Cloud
